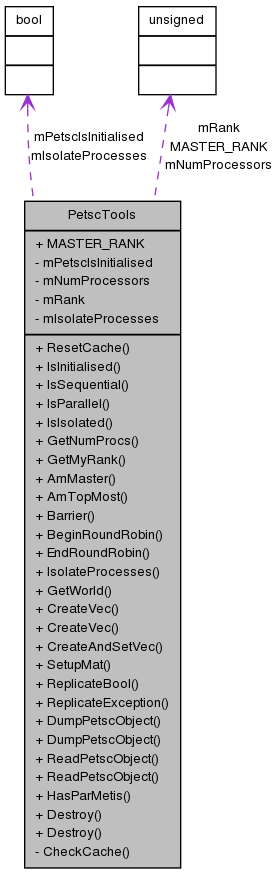

PetscTools Class Reference

#include <PetscTools.hpp>

Static Public Member Functions | |

| static void | ResetCache () |

| static bool | IsInitialised () |

| static bool | IsSequential () |

| static bool | IsParallel () |

| static bool | IsIsolated () |

| static unsigned | GetNumProcs () |

| static unsigned | GetMyRank () |

| static bool | AmMaster () |

| static bool | AmTopMost () |

| static void | Barrier (const std::string callerId="") |

| static void | BeginRoundRobin () |

| static void | EndRoundRobin () |

| static void | IsolateProcesses (bool isolate=true) |

| static MPI_Comm | GetWorld () |

| static Vec | CreateVec (int size, int localSize=PETSC_DECIDE, bool ignoreOffProcEntries=true) |

| static Vec | CreateVec (std::vector< double > data) |

| static Vec | CreateAndSetVec (int size, double value) |

| static void | SetupMat (Mat &rMat, int numRows, int numColumns, unsigned rowPreallocation, int numLocalRows=PETSC_DECIDE, int numLocalColumns=PETSC_DECIDE, bool ignoreOffProcEntries=true, bool newAllocationError=true) |

| static bool | ReplicateBool (bool flag) |

| static void | ReplicateException (bool flag) |

| static void | DumpPetscObject (const Mat &rMat, const std::string &rOutputFileFullPath) |

| static void | DumpPetscObject (const Vec &rVec, const std::string &rOutputFileFullPath) |

| static void | ReadPetscObject (Mat &rMat, const std::string &rOutputFileFullPath, Vec rParallelLayout=NULL) |

| static void | ReadPetscObject (Vec &rVec, const std::string &rOutputFileFullPath, Vec rParallelLayout=NULL) |

| static bool | HasParMetis () |

| static void | Destroy (Vec &rVec) |

| static void | Destroy (Mat &rMat) |

Static Public Attributes | |

| static const unsigned | MASTER_RANK = 0 |

Static Private Member Functions | |

| static void | CheckCache () |

Static Private Attributes | |

| static bool | mPetscIsInitialised = false |

| static unsigned | mNumProcessors = 0 |

| static unsigned | mRank = 0 |

| static bool | mIsolateProcesses = false |

Detailed Description

A helper class of static methods.

Any PETSc operation that can be performed using the methods in this class, should be.

This ensures a consistent interface in Chaste even when PETSc arguments change between PETSc versions. For example VecDestroy takes different arguments in 3.2, and using PetscTools::Destroy(vec); takes care of this.

Definition at line 110 of file PetscTools.hpp.

Member Function Documentation

| bool PetscTools::AmMaster | ( | ) | [static] |

- Returns:

- whether it is the master process or not.

If not running in parallel, or if IsolateProcesses has been called, always returns true.

Definition at line 120 of file PetscTools.cpp.

References CheckCache(), MASTER_RANK, mIsolateProcesses, and mRank.

Referenced by NodeBasedCellPopulation< DIM >::AddReceivedCells(), NodeBasedCellPopulation< DIM >::AddReceivedHaloCells(), ParallelColumnDataWriter::AdvanceAlongUnlimitedDimension(), ArchiveOpener< Archive, Stream >::ArchiveOpener(), ParallelColumnDataWriter::Close(), CellBasedPdeHandler< DIM >::CloseResultsFiles(), OutputFileHandler::CommonConstructor(), NumericFileComparison::CompareFiles(), DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::ComputeMeshPartitioning(), DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::ConstructCuboid(), DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::ConstructLinearMesh(), DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::ConstructRectangularMesh(), AbstractConvergenceTester< CELL, CARDIAC_PROBLEM, DIM, PROBLEM_DIM >::Converge(), CellMLToSharedLibraryConverter::ConvertCellmlToSo(), OutputFileHandler::CopyFileTo(), OutputDirectoryFifoQueue::CreateNextDir(), CellMLToSharedLibraryConverter::CreateOptionsFile(), CardiacSimulation::CreateResumeXmlFile(), AbstractConvergenceTester< CELL, CARDIAC_PROBLEM, DIM, PROBLEM_DIM >::DisplayRun(), ParallelColumnDataWriter::EndDefineMode(), NodesOnlyMesh< SPACE_DIM >::EnlargeBoxCollection(), ActivationOutputModifier::FinaliseAtEnd(), AbstractTetrahedralMeshWriter< ELEMENT_DIM, SPACE_DIM >::GetNextBoundaryElement(), AbstractTetrahedralMeshWriter< ELEMENT_DIM, SPACE_DIM >::GetNextCableElement(), AbstractTetrahedralMeshWriter< ELEMENT_DIM, SPACE_DIM >::GetNextElement(), AbstractTetrahedralMeshWriter< ELEMENT_DIM, SPACE_DIM >::GetNextNode(), NodeBasedCellPopulation< DIM >::GetReceivedCells(), Hdf5ToTxtConverter< ELEMENT_DIM, SPACE_DIM >::Hdf5ToTxtConverter(), GenericEventHandler< 11, MeshEventHandler >::HeadingsImpl(), AbstractBidomainSolver< ELEMENT_DIM, SPACE_DIM >::InitialiseForSolve(), DistributedBoxCollection< DIM >::LoadBalance(), OutputFileHandler::MakeFoldersAndReturnFullPath(), NodePartitioner< ELEMENT_DIM, SPACE_DIM >::MetisLibraryPartitioning(), NodeBasedCellPopulation< DIM >::NonBlockingSendCellsToNeighbourProcesses(), CellBasedPdeHandler< DIM >::OpenResultsFiles(), AbstractCellBasedSimulation< ELEMENT_DIM, SPACE_DIM >::OutputSimulationSetup(), NodePartitioner< ELEMENT_DIM, SPACE_DIM >::PetscMatrixPartitioning(), StreeterFibreGenerator< SPACE_DIM >::PreWriteCalculations(), ExecutableSupport::Print(), Citations::Print(), ExecutableSupport::PrintError(), ProgressReporter::PrintFinalising(), ProgressReporter::PrintInitialising(), ProgressReporter::ProgressReporter(), Hdf5DataWriter::PutUnlimitedVariable(), ParallelColumnDataWriter::PutVariable(), ParallelColumnDataWriter::PutVector(), GenericEventHandler< 11, MeshEventHandler >::ReportImpl(), AbstractFileComparison::ResetFiles(), AbstractTetrahedralMesh< SPACE_DIM, SPACE_DIM >::save(), HeartConfig::save(), CardiacSimulationArchiver< PROBLEM_CLASS >::Save(), NodeBasedCellPopulation< DIM >::SendCellsToNeighbourProcesses(), AbstractFileComparison::Setup(), DistributedBoxCollection< DIM >::SetupHaloBoxes(), ExecutableSupport::ShowCopyright(), AbstractFileComparison::SkipHeaderLines(), LinearSystem::Solve(), AbstractCellBasedSimulation< ELEMENT_DIM, SPACE_DIM >::Solve(), ProgressReporter::Update(), CellProliferativeTypesCountWriter< ELEMENT_DIM, SPACE_DIM >::Visit(), CellProliferativePhasesCountWriter< ELEMENT_DIM, SPACE_DIM >::Visit(), CellMutationStatesCountWriter< ELEMENT_DIM, SPACE_DIM >::Visit(), CellProliferativeTypesCountWriter< ELEMENT_DIM, SPACE_DIM >::VisitAnyPopulation(), CellProliferativePhasesCountWriter< ELEMENT_DIM, SPACE_DIM >::VisitAnyPopulation(), CellMutationStatesCountWriter< ELEMENT_DIM, SPACE_DIM >::VisitAnyPopulation(), Hdf5ToMeshalyzerConverter< ELEMENT_DIM, SPACE_DIM >::Write(), HeartConfig::Write(), Hdf5ToCmguiConverter< ELEMENT_DIM, SPACE_DIM >::Write(), Hdf5ToCmguiConverter< ELEMENT_DIM, SPACE_DIM >::WriteCmguiScript(), AbstractContinuumMechanicsSolver< DIM >::WriteCurrentPressureSolution(), AbstractContinuumMechanicsSolver< DIM >::WriteCurrentSpatialSolution(), AbstractPerElementWriter< SPACE_DIM, SPACE_DIM, SPACE_DIM *SPACE_DIM >::WriteData(), XdmfMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteFiles(), XdmfMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteFilesUsingMesh(), VtkMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteFilesUsingMesh(), AbstractTetrahedralMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteFilesUsingMesh(), AbstractMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteFilesUsingMeshReader(), AbstractTetrahedralMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteFilesUsingParallelMesh(), PostProcessingWriter< ELEMENT_DIM, SPACE_DIM >::WriteGenericFileToMeshalyzer(), CellMutationStatesCountWriter< ELEMENT_DIM, SPACE_DIM >::WriteHeader(), MonodomainProblem< ELEMENT_DIM, SPACE_DIM >::WriteInfo(), ExtendedBidomainProblem< DIM >::WriteInfo(), BidomainProblem< DIM >::WriteInfo(), AbstractHdf5Converter< ELEMENT_DIM, SPACE_DIM >::WriteInfoFile(), HeartGeometryInformation< SPACE_DIM >::WriteLayerForEachNode(), AbstractTetrahedralMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteNclFile(), CellBasedPdeHandler< DIM >::WritePdeSolution(), PseudoEcgCalculator< ELEMENT_DIM, SPACE_DIM, PROBLEM_DIM >::WritePseudoEcg(), AbstractCellPopulation< ELEMENT_DIM, SPACE_DIM >::WriteResultsToFiles(), OdeSolution::WriteToFile(), PostProcessingWriter< ELEMENT_DIM, SPACE_DIM >::WriteVariablesOverTimeAtNodes(), OffLatticeSimulation< ELEMENT_DIM, SPACE_DIM >::WriteVisualizerSetupFile(), XdmfMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteXdmfMasterFile(), LinearSystem::~LinearSystem(), and ProgressReporter::~ProgressReporter().

| bool PetscTools::AmTopMost | ( | ) | [static] |

- Returns:

- whether it is the right-most process or not.

If not running in parallel, or if IsolateProcesses has been called, always returns true.

Definition at line 126 of file PetscTools.cpp.

References CheckCache(), mIsolateProcesses, mNumProcessors, and mRank.

Referenced by NodeBasedCellPopulation< DIM >::AddReceivedCells(), NodeBasedCellPopulation< DIM >::AddReceivedHaloCells(), DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::ConstructFromMeshReader(), NodesOnlyMesh< SPACE_DIM >::EnlargeBoxCollection(), NodeBasedCellPopulation< DIM >::GetReceivedCells(), DistributedBoxCollection< DIM >::LoadBalance(), NodeBasedCellPopulation< DIM >::NonBlockingSendCellsToNeighbourProcesses(), NodePartitioner< ELEMENT_DIM, SPACE_DIM >::PetscMatrixPartitioning(), NodeBasedCellPopulation< DIM >::SendCellsToNeighbourProcesses(), DistributedBoxCollection< DIM >::SetupHaloBoxes(), AbstractTetrahedralMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteFilesUsingParallelMesh(), PostProcessingWriter< ELEMENT_DIM, SPACE_DIM >::WriteGenericFileToMeshalyzer(), AbstractTetrahedralMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteNclFile(), and AbstractCellPopulation< ELEMENT_DIM, SPACE_DIM >::WriteResultsToFiles().

| void PetscTools::Barrier | ( | const std::string | callerId = "" |

) | [static] |

If MPI is set up, perform a barrier synchronisation. If not, or if IsolateProcesses has been called, it's a noop.

- Parameters:

-

callerId only used in debug mode; printed before & after the barrier call

Definition at line 134 of file PetscTools.cpp.

References CheckCache(), GetMyRank(), mIsolateProcesses, and mPetscIsInitialised.

Referenced by ParallelColumnDataWriter::AdvanceAlongUnlimitedDimension(), GenericEventHandler< 11, MeshEventHandler >::BeginEventImpl(), BeginRoundRobin(), ElectrodesStimulusFactory< DIM >::CheckForElectrodesIntersection(), ParallelColumnDataWriter::Close(), OutputFileHandler::CommonConstructor(), DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::ComputeMeshPartitioning(), DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::ConstructCuboid(), DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::ConstructFromMeshReader(), DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::ConstructLinearMesh(), DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::ConstructRectangularMesh(), OutputFileHandler::CopyFileTo(), OutputDirectoryFifoQueue::CreateNextDir(), CellMLToSharedLibraryConverter::CreateOptionsFile(), GenericEventHandler< 11, MeshEventHandler >::EndEventImpl(), EndRoundRobin(), NodePartitioner< ELEMENT_DIM, SPACE_DIM >::GeometricPartitioning(), Hdf5ToCmguiConverter< ELEMENT_DIM, SPACE_DIM >::Hdf5ToCmguiConverter(), Hdf5ToMeshalyzerConverter< ELEMENT_DIM, SPACE_DIM >::Hdf5ToMeshalyzerConverter(), GenericEventHandler< 11, MeshEventHandler >::HeadingsImpl(), AbstractCardiacProblem< ELEMENT_DIM, SPACE_DIM, PROBLEM_DIM >::InitialiseWriter(), OutputFileHandler::MakeFoldersAndReturnFullPath(), NodePartitioner< ELEMENT_DIM, SPACE_DIM >::PetscMatrixPartitioning(), ExtendedBidomainProblem< DIM >::ProcessExtracellularStimulus(), GenericEventHandler< 11, MeshEventHandler >::ReportImpl(), AbstractTetrahedralMesh< SPACE_DIM, SPACE_DIM >::save(), HeartConfig::save(), CardiacSimulationArchiver< PROBLEM_CLASS >::Save(), AbstractFileComparison::Setup(), CardiacElectroMechanicsProblem< DIM, ELEC_PROB_DIM >::Solve(), NodeBasedCellPopulation< DIM >::Update(), AbstractContinuumMechanicsSolver< DIM >::WriteCurrentPressureSolution(), AbstractContinuumMechanicsSolver< DIM >::WriteCurrentSpatialSolution(), XdmfMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteFilesUsingMesh(), AbstractTetrahedralMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteFilesUsingMesh(), AbstractTetrahedralMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteFilesUsingParallelMesh(), HeartGeometryInformation< SPACE_DIM >::WriteLayerForEachNode(), and AbstractTetrahedralMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteNclFile().

| void PetscTools::BeginRoundRobin | ( | ) | [static] |

Call at the start of a block of code that should be executed by each process in turn.

Note that this is not reliable for printing output to stdout in an ordered fashion, since on some systems each process may have a separate stdout buffer, and there's no way to force a flush to the underlying output stream. See e.g. http://stackoverflow.com/questions/5182045/openmpi-mpi-barrier-problems for more info.

Definition at line 149 of file PetscTools.cpp.

References Barrier(), and GetMyRank().

Referenced by ActivationOutputModifier::FinaliseAtEnd(), GenericEventHandler< 11, MeshEventHandler >::ReportImpl(), ExecutableSupport::ShowParallelLaunching(), AbstractTetrahedralMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteFilesUsingParallelMesh(), PostProcessingWriter< ELEMENT_DIM, SPACE_DIM >::WriteGenericFileToMeshalyzer(), AbstractTetrahedralMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteNclFile(), and AbstractCellPopulation< ELEMENT_DIM, SPACE_DIM >::WriteResultsToFiles().

| static void PetscTools::CheckCache | ( | ) | [inline, static, private] |

Private method makes sure that (if this is the first use within a test) then PETSc has been probed.

Definition at line 127 of file PetscTools.hpp.

References mNumProcessors, and ResetCache().

Referenced by AmMaster(), AmTopMost(), Barrier(), GetMyRank(), GetNumProcs(), IsInitialised(), IsParallel(), IsSequential(), and ReplicateBool().

Create a vector of the specified size with all values set to be the given constant. SetFromOptions is called.

- Parameters:

-

size the size of the vector value the value to set each entry

- Returns:

- new PETSc vector

Definition at line 253 of file PetscTools.cpp.

References CreateVec().

Referenced by OdeLinearSystemSolver::OdeLinearSystemSolver(), NodeBasedCellPopulationWithBuskeUpdate< DIM >::UpdateNodeLocations(), and AbstractNonlinearAssemblerSolverHybrid< ELEMENT_DIM, SPACE_DIM, PROBLEM_DIM >::VerifyJacobian().

Create a Vec from the given data.

- Parameters:

-

data some data

- Returns:

- new PETSc vector

Definition at line 233 of file PetscTools.cpp.

References CreateVec().

| Vec PetscTools::CreateVec | ( | int | size, | |

| int | localSize = PETSC_DECIDE, |

|||

| bool | ignoreOffProcEntries = true | |||

| ) | [static] |

Create a vector of the specified size. SetFromOptions is called.

- Parameters:

-

size the size of the vector localSize the local number of items owned by this process ignoreOffProcEntries whether to ignore entries destined to be stored on a separate processor when assembling (eliminates global reductions).

- Returns:

- new PETSc vector

Definition at line 213 of file PetscTools.cpp.

Referenced by AbstractNonlinearAssemblerSolverHybrid< ELEMENT_DIM, SPACE_DIM, PROBLEM_DIM >::ComputeJacobianNumerically(), PseudoEcgCalculator< ELEMENT_DIM, SPACE_DIM, PROBLEM_DIM >::ComputePseudoEcgAtOneTimeStep(), CreateAndSetVec(), CreateVec(), PetscMatTools::GetMatrixRowDistributed(), LinearSystem::LinearSystem(), PCBlockDiagonal::PCBlockDiagonalCreate(), PCLDUFactorisation::PCLDUFactorisationCreate(), PCTwoLevelsBlockDiagonal::PCTwoLevelsBlockDiagonalCreate(), Hdf5DataWriter::PutStripedVector(), Hdf5DataWriter::PutVector(), PetscVecTools::SetupInterleavedVectorScatterGather(), and VoltageInterpolaterOntoMechanicsMesh< DIM >::VoltageInterpolaterOntoMechanicsMesh().

| static void PetscTools::Destroy | ( | Mat & | rMat | ) | [inline, static] |

Destroy method Note that PETSc 3.1 and previous destroy based on a PETSc object but PETSc 3.2 and later destroy based on a pointer to a PETSc object

- Parameters:

-

rMat a reference to the PETSc object

Definition at line 367 of file PetscTools.hpp.

| static void PetscTools::Destroy | ( | Vec & | rVec | ) | [inline, static] |

Destroy method Note that PETSc 3.1 and previous destroy based on a PETSc object but PETSc 3.2 and later destroy based on a pointer to a PETSc object

- Parameters:

-

rVec a reference to the PETSc object

Definition at line 351 of file PetscTools.hpp.

Referenced by AbstractContinuumMechanicsSolver< DIM >::AllocateMatrixMemory(), AbstractContinuumMechanicsSolver< DIM >::ApplyDirichletBoundaryConditions(), BoundaryConditionsContainer< ELEMENT_DIM, SPACE_DIM, PROBLEM_DIM >::ApplyDirichletToLinearProblem(), PetscMatTools::CheckEquality(), PetscMatTools::CheckSymmetry(), AbstractNonlinearAssemblerSolverHybrid< ELEMENT_DIM, SPACE_DIM, PROBLEM_DIM >::ComputeJacobianNumerically(), PseudoEcgCalculator< ELEMENT_DIM, SPACE_DIM, PROBLEM_DIM >::ComputePseudoEcgAtOneTimeStep(), PdeAndBoundaryConditions< DIM >::DestroySolution(), DistributedVectorFactory::DistributedVectorFactory(), AbstractExtendedBidomainSolver< ELEMENT_DIM, SPACE_DIM >::FinaliseLinearSystem(), AbstractBidomainSolver< ELEMENT_DIM, SPACE_DIM >::FinaliseLinearSystem(), HasParMetis(), Hdf5ToTxtConverter< ELEMENT_DIM, SPACE_DIM >::Hdf5ToTxtConverter(), Hdf5ToVtkConverter< ELEMENT_DIM, SPACE_DIM >::Hdf5ToVtkConverter(), AbstractCardiacProblem< ELEMENT_DIM, SPACE_DIM, PROBLEM_DIM >::Initialise(), AbstractLinearPdeSolver< ELEMENT_DIM, SPACE_DIM, PROBLEM_DIM >::InitialiseForSolve(), ExtendedBidomainProblem< DIM >::load(), AbstractCardiacProblem< DIM, DIM, 1 >::load(), PCBlockDiagonal::PCBlockDiagonalCreate(), PCLDUFactorisation::PCLDUFactorisationCreate(), PCTwoLevelsBlockDiagonal::PCTwoLevelsBlockDiagonalCreate(), NodePartitioner< ELEMENT_DIM, SPACE_DIM >::PetscMatrixPartitioning(), Hdf5DataWriter::PutStripedVector(), Hdf5DataWriter::PutVector(), ParallelColumnDataWriter::PutVectorStripe(), ReadPetscObject(), ReplicatableVector::RemovePetscContext(), ReplicatableVector::Replicate(), PetscVecTools::SetupInterleavedVectorScatterGather(), AbstractDynamicLinearPdeSolver< ELEMENT_DIM, SPACE_DIM, PROBLEM_DIM >::Solve(), SimplePetscNonlinearSolver::Solve(), SimpleNewtonNonlinearSolver::Solve(), LinearSystem::Solve(), CardiacElectroMechanicsProblem< DIM, ELEC_PROB_DIM >::Solve(), AbstractCardiacProblem< ELEMENT_DIM, SPACE_DIM, PROBLEM_DIM >::Solve(), StokesFlowSolver< DIM >::Solve(), LinearParabolicPdeSystemWithCoupledOdeSystemSolver< ELEMENT_DIM, SPACE_DIM, PROBLEM_DIM >::SolveAndWriteResultsToFile(), OdeLinearSystemSolver::SolveOneTimeStep(), CellBasedPdeHandler< DIM >::SolvePdeAndWriteResultsToFile(), AbstractNonlinearElasticitySolver< DIM >::SolveSnes(), AbstractNonlinearElasticitySolver< DIM >::TakeNewtonStep(), NodeBasedCellPopulationWithBuskeUpdate< DIM >::UpdateNodeLocations(), AbstractNonlinearAssemblerSolverHybrid< ELEMENT_DIM, SPACE_DIM, PROBLEM_DIM >::VerifyJacobian(), VoltageInterpolaterOntoMechanicsMesh< DIM >::VoltageInterpolaterOntoMechanicsMesh(), Hdf5ToMeshalyzerConverter< ELEMENT_DIM, SPACE_DIM >::Write(), Hdf5ToCmguiConverter< ELEMENT_DIM, SPACE_DIM >::Write(), AbstractCardiacProblem< ELEMENT_DIM, SPACE_DIM, PROBLEM_DIM >::WriteExtraVariablesOneStep(), ExtendedBidomainProblem< DIM >::WriteOneStep(), PostProcessingWriter< ELEMENT_DIM, SPACE_DIM >::WriteOutputDataToHdf5(), AbstractCardiacProblem< ELEMENT_DIM, SPACE_DIM, PROBLEM_DIM >::~AbstractCardiacProblem(), AbstractContinuumMechanicsSolver< DIM >::~AbstractContinuumMechanicsSolver(), ExtendedBidomainSolver< ELEM_DIM, SPACE_DIM >::~ExtendedBidomainSolver(), Hdf5DataWriter::~Hdf5DataWriter(), LinearSystem::~LinearSystem(), MonodomainSolver< ELEMENT_DIM, SPACE_DIM >::~MonodomainSolver(), OdeLinearSystemSolver::~OdeLinearSystemSolver(), OperatorSplittingMonodomainSolver< ELEMENT_DIM, SPACE_DIM >::~OperatorSplittingMonodomainSolver(), ParallelColumnDataWriter::~ParallelColumnDataWriter(), and PCTwoLevelsBlockDiagonal::~PCTwoLevelsBlockDiagonal().

| void PetscTools::DumpPetscObject | ( | const Vec & | rVec, | |

| const std::string & | rOutputFileFullPath | |||

| ) | [static] |

Dumps a given PETSc object to disk.

- Parameters:

-

rVec a vector rOutputFileFullPath where to dump the vector to disk

Definition at line 346 of file PetscTools.cpp.

References PETSC_DESTROY_PARAM.

| void PetscTools::DumpPetscObject | ( | const Mat & | rMat, | |

| const std::string & | rOutputFileFullPath | |||

| ) | [static] |

Dumps a given PETSc object to disk.

- Parameters:

-

rMat a matrix rOutputFileFullPath where to dump the matrix to disk

Definition at line 332 of file PetscTools.cpp.

References PETSC_DESTROY_PARAM.

| void PetscTools::EndRoundRobin | ( | ) | [static] |

Call at the end of a block of code that should be executed by each process in turn.

Definition at line 159 of file PetscTools.cpp.

References Barrier(), GetMyRank(), and GetNumProcs().

Referenced by ActivationOutputModifier::FinaliseAtEnd(), GenericEventHandler< 11, MeshEventHandler >::ReportImpl(), ExecutableSupport::ShowParallelLaunching(), AbstractTetrahedralMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteFilesUsingParallelMesh(), PostProcessingWriter< ELEMENT_DIM, SPACE_DIM >::WriteGenericFileToMeshalyzer(), AbstractTetrahedralMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteNclFile(), and AbstractCellPopulation< ELEMENT_DIM, SPACE_DIM >::WriteResultsToFiles().

| unsigned PetscTools::GetMyRank | ( | ) | [static] |

- Returns:

- our rank.

If PETSc has not been initialized, returns 0.

Definition at line 114 of file PetscTools.cpp.

References CheckCache(), and mRank.

Referenced by VtkMeshWriter< ELEMENT_DIM, SPACE_DIM >::AddPointData(), ArchiveOpener< Archive, Stream >::ArchiveOpener(), IncompressibleNonlinearElasticitySolver< DIM >::AssembleSystem(), CompressibleNonlinearElasticitySolver< DIM >::AssembleSystem(), CellId::AssignCellId(), Barrier(), BeginRoundRobin(), NodesOnlyMesh< SPACE_DIM >::CalculateNodesOutsideLocalDomain(), DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::ConstructFromMeshReader(), AbstractContinuumMechanicsAssembler< DIM, CAN_ASSEMBLE_VECTOR, CAN_ASSEMBLE_MATRIX >::DoAssemble(), EndRoundRobin(), FormDebugHead(), NodePartitioner< ELEMENT_DIM, SPACE_DIM >::GeometricPartitioning(), MixedDimensionMesh< ELEMENT_DIM, SPACE_DIM >::GetCableElement(), AbstractCardiacTissue< ELEMENT_DIM, SPACE_DIM >::GetCardiacCellOrHaloCell(), NodesOnlyMesh< SPACE_DIM >::GetMaximumNodeIndex(), NodesOnlyMesh< SPACE_DIM >::GetNextAvailableIndex(), DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::GetNodeOrHaloNode(), DistributedBoxCollection< DIM >::GetProcessOwningNode(), DistributedBoxCollection< DIM >::LoadBalance(), NodePartitioner< ELEMENT_DIM, SPACE_DIM >::MetisLibraryPartitioning(), CardiacSimulationArchiver< PROBLEM_CLASS >::Migrate(), NodeBasedCellPopulation< DIM >::NonBlockingSendCellsToNeighbourProcesses(), DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::ParMetisLibraryNodeAndElementPartitioning(), NodePartitioner< ELEMENT_DIM, SPACE_DIM >::PetscMatrixPartitioning(), Timer::Print(), ExecutableSupport::PrintError(), TetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::ReadNodesPerProcessorFile(), GenericEventHandler< 11, MeshEventHandler >::ReportImpl(), NodeBasedCellPopulation< DIM >::SendCellsToNeighbourProcesses(), ExecutableSupport::ShowParallelLaunching(), StokesFlowSolver< DIM >::Solve(), DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::SolveBoundaryElementMapping(), AbstractCardiacTissue< ELEMENT_DIM, SPACE_DIM >::SolveCellSystems(), DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::SolveElementMapping(), NodesOnlyMesh< SPACE_DIM >::SolveNodeMapping(), DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::SolveNodeMapping(), AbstractNonlinearElasticitySolver< DIM >::TakeNewtonStep(), DistanceMapCalculator< ELEMENT_DIM, SPACE_DIM >::UpdateQueueFromRemote(), XdmfMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteFilesUsingMesh(), VtkMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteFilesUsingMesh(), AbstractTetrahedralMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteFilesUsingParallelMesh(), ExecutableSupport::WriteMachineInfoFile(), ExecutableSupport::WriteProvenanceInfoFile(), NodeBasedCellPopulationWithParticles< DIM >::WriteVtkResultsToFile(), and NodeBasedCellPopulation< DIM >::WriteVtkResultsToFile().

| unsigned PetscTools::GetNumProcs | ( | ) | [static] |

- Returns:

- total number of processors.

Definition at line 108 of file PetscTools.cpp.

References CheckCache(), and mNumProcessors.

Referenced by VtkMeshWriter< ELEMENT_DIM, SPACE_DIM >::AddPointData(), CellId::AssignCellId(), AbstractCardiacTissue< ELEMENT_DIM, SPACE_DIM >::CalculateHaloNodesFromNodeExchange(), AbstractTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::CalculateNodeExchange(), DistanceMapCalculator< ELEMENT_DIM, SPACE_DIM >::ComputeDistanceMap(), DistanceMapCalculator< ELEMENT_DIM, SPACE_DIM >::DistanceMapCalculator(), DistributedBoxCollection< DIM >::DistributedBoxCollection(), EndRoundRobin(), NodePartitioner< ELEMENT_DIM, SPACE_DIM >::GeometricPartitioning(), NodesOnlyMesh< SPACE_DIM >::GetMaximumNodeIndex(), NodesOnlyMesh< SPACE_DIM >::GetNextAvailableIndex(), NodePartitioner< ELEMENT_DIM, SPACE_DIM >::MetisLibraryPartitioning(), CardiacSimulationArchiver< PROBLEM_CLASS >::Migrate(), ObjectCommunicator< CLASS >::ObjectCommunicator(), DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::ParMetisLibraryNodeAndElementPartitioning(), NodePartitioner< ELEMENT_DIM, SPACE_DIM >::PetscMatrixPartitioning(), TetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::ReadNodesPerProcessorFile(), GenericEventHandler< 11, MeshEventHandler >::ReportImpl(), DistributedVectorFactory::rGetGlobalLows(), CardiacSimulationArchiver< PROBLEM_CLASS >::Save(), AbstractMesh< ELEMENT_DIM, SPACE_DIM >::SetDistributedVectorFactory(), ExecutableSupport::ShowParallelLaunching(), AbstractCardiacTissue< ELEMENT_DIM, SPACE_DIM >::SolveCellSystems(), DistanceMapCalculator< ELEMENT_DIM, SPACE_DIM >::UpdateQueueFromRemote(), DistanceMapCalculator< ELEMENT_DIM, SPACE_DIM >::WorkOnLocalQueue(), XdmfMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteFilesUsingMesh(), VtkMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteFilesUsingMesh(), AbstractTetrahedralMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteFilesUsingParallelMesh(), and ExecutableSupport::WriteMachineInfoFile().

| MPI_Comm PetscTools::GetWorld | ( | ) | [static] |

Get the MPI Communicator representing the whole set of running processes. This will normally be PETSC_COMM_WORLD, unless IsolateProcesses has been called, in which case it will be PETSC_COMM_SELF.

- Returns:

- the MPI Communicator representing the whole set of running processes

Definition at line 173 of file PetscTools.cpp.

References mIsolateProcesses.

Referenced by AbstractCellPopulation< ELEMENT_DIM, SPACE_DIM >::GetCellCyclePhaseCount(), AbstractCellPopulation< ELEMENT_DIM, SPACE_DIM >::GetCellMutationStateCount(), AbstractCellPopulation< ELEMENT_DIM, SPACE_DIM >::GetCellProliferativeTypeCount(), NodeBasedCellPopulation< DIM >::GetSizeOfCellPopulation(), NodesOnlyMesh< SPACE_DIM >::GetWidth(), ObjectCommunicator< CLASS >::IRecvObject(), NodesOnlyMesh< SPACE_DIM >::IsANodeCloseToDomainBoundary(), ObjectCommunicator< CLASS >::ISendObject(), ObjectCommunicator< CLASS >::RecvObject(), GenericEventHandler< 11, MeshEventHandler >::ReportImpl(), ObjectCommunicator< CLASS >::SendObject(), and ObjectCommunicator< CLASS >::SendRecvObject().

| bool PetscTools::HasParMetis | ( | ) | [static] |

Checks if PETSc has been configured with ParMetis partioning support.

- Returns:

- true If ParMetis partitioning (via PETSc) is available

Definition at line 444 of file PetscTools.cpp.

References Destroy(), PETSC_DESTROY_PARAM, and SetupMat().

Referenced by NodePartitioner< ELEMENT_DIM, SPACE_DIM >::PetscMatrixPartitioning().

| bool PetscTools::IsInitialised | ( | ) | [static] |

- Returns:

- whether PETSc has been initialised yet.

Definition at line 85 of file PetscTools.cpp.

References CheckCache(), and mPetscIsInitialised.

| bool PetscTools::IsIsolated | ( | ) | [static] |

- Returns:

- whether this process is isolated, i.e. IsolateProcesses has been called.

Definition at line 103 of file PetscTools.cpp.

References mIsolateProcesses.

Referenced by GenericEventHandler< 11, MeshEventHandler >::ReportImpl().

| void PetscTools::IsolateProcesses | ( | bool | isolate = true |

) | [static] |

Where work can be split between isolated processes, it would be nice to be able to do so easily without worrying about collective calls made inside classes such as OutputFileHandler leading to deadlock. This method attempts to enable this behaviour. If the flag is set then AmMaster and AmTopMost always return true, Barrier becomes a no-op, and ReplicateBool doesn't replicate.

- Parameters:

-

isolate whether to consider processes as isolated

Definition at line 168 of file PetscTools.cpp.

References mIsolateProcesses.

| bool PetscTools::IsParallel | ( | ) | [static] |

- Returns:

- whether there is more than one process.

Definition at line 97 of file PetscTools.cpp.

References CheckCache(), and mNumProcessors.

Referenced by DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::ComputeMeshPartitioning(), DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::ConstructFromMeshReader(), FormDebugHead(), AbstractCellPopulation< ELEMENT_DIM, SPACE_DIM >::GetCellCyclePhaseCount(), AbstractCellPopulation< ELEMENT_DIM, SPACE_DIM >::GetCellMutationStateCount(), AbstractCellPopulation< ELEMENT_DIM, SPACE_DIM >::GetCellProliferativeTypeCount(), AbstractMesh< ELEMENT_DIM, SPACE_DIM >::GetDistributedVectorFactory(), DistributedBoxCollection< DIM >::GetHaloBoxOwnership(), GenericEventHandler< 11, MeshEventHandler >::HeadingsImpl(), AbstractCardiacProblem< ELEMENT_DIM, SPACE_DIM, PROBLEM_DIM >::Initialise(), NodePartitioner< ELEMENT_DIM, SPACE_DIM >::MetisLibraryPartitioning(), DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::ParMetisLibraryNodeAndElementPartitioning(), NodePartitioner< ELEMENT_DIM, SPACE_DIM >::PetscMatrixPartitioning(), Timer::Print(), DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::ReorderNodes(), GenericEventHandler< 11, MeshEventHandler >::ReportImpl(), CellId::serialize(), ExecutableSupport::ShowParallelLaunching(), and XdmfMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteFilesUsingMesh().

| bool PetscTools::IsSequential | ( | ) | [static] |

- Returns:

- whether there is one process or not.

Definition at line 91 of file PetscTools.cpp.

References CheckCache(), mIsolateProcesses, and mNumProcessors.

Referenced by AbstractCardiacTissue< ELEMENT_DIM, SPACE_DIM >::AbstractCardiacTissue(), AbstractContinuumMechanicsSolver< DIM >::AllocateMatrixMemory(), CylindricalHoneycombMeshGenerator::CylindricalHoneycombMeshGenerator(), DistanceMapCalculator< ELEMENT_DIM, SPACE_DIM >::DistanceMapCalculator(), DistributedBoxCollection< DIM >::DistributedBoxCollection(), VertexBasedCellPopulation< DIM >::GetTetrahedralMeshUsingVertexMesh(), HoneycombMeshGenerator::HoneycombMeshGenerator(), AbstractBidomainSolver< ELEMENT_DIM, SPACE_DIM >::InitialiseForSolve(), DistributedBoxCollection< DIM >::IsInteriorBox(), NodeBasedCellPopulationWithParticles< DIM >::NodeBasedCellPopulationWithParticles(), PCTwoLevelsBlockDiagonal::PCTwoLevelsBlockDiagonalCreate(), AbstractNonlinearElasticitySolver< DIM >::SetKspSolverAndPcType(), VtkMeshWriter< ELEMENT_DIM, SPACE_DIM >::SetParallelFiles(), SetupMat(), VtkMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteFilesUsingMesh(), AbstractTetrahedralMeshWriter< ELEMENT_DIM, SPACE_DIM >::WriteNclFile(), NodeBasedCellPopulationWithParticles< DIM >::WriteVtkResultsToFile(), and NodeBasedCellPopulation< DIM >::WriteVtkResultsToFile().

| void PetscTools::ReadPetscObject | ( | Vec & | rVec, | |

| const std::string & | rOutputFileFullPath, | |||

| Vec | rParallelLayout = NULL | |||

| ) | [static] |

Read a previously dumped PETSc object from disk.

- Parameters:

-

rVec a vector rOutputFileFullPath where to read the matrix from rParallelLayout If provided, rMat will have the same parallel layout. Its content is irrelevant.

Definition at line 411 of file PetscTools.cpp.

References PETSC_DESTROY_PARAM.

| void PetscTools::ReadPetscObject | ( | Mat & | rMat, | |

| const std::string & | rOutputFileFullPath, | |||

| Vec | rParallelLayout = NULL | |||

| ) | [static] |

Read a previously dumped PETSc object from disk.

- Parameters:

-

rMat a matrix rOutputFileFullPath where to read the matrix from rParallelLayout If provided, rMat will have the same parallel layout. Its content is irrelevant.

- Todo:

- : #1082 work out appropriate nz allocation.

Definition at line 360 of file PetscTools.cpp.

References Destroy(), PETSC_DESTROY_PARAM, and SetupMat().

Boolean OR of flags between processes.

- Parameters:

-

flag is set to true on this process.

- Returns:

- true if any process' flag is set to true.

Definition at line 185 of file PetscTools.cpp.

References CheckCache(), mIsolateProcesses, and mPetscIsInitialised.

Referenced by AbstractCardiacTissue< ELEMENT_DIM, SPACE_DIM >::AbstractCardiacTissue(), BidomainProblem< DIM >::AnalyseMeshForBath(), DistanceMapCalculator< ELEMENT_DIM, SPACE_DIM >::ComputeDistanceMap(), BoundaryConditionsContainer< ELEMENT_DIM, SPACE_DIM, PROBLEM_DIM >::DefineConstantDirichletOnMeshBoundary(), AbstractBoundaryConditionsContainer< ELEMENT_DIM, SPACE_DIM, PROBLEM_DIM >::HasDirichletBoundaryConditions(), ReplicateException(), CardiacSimulationArchiver< PROBLEM_CLASS >::Save(), and DistanceMapCalculator< ELEMENT_DIM, SPACE_DIM >::UpdateQueueFromRemote().

| void PetscTools::ReplicateException | ( | bool | flag | ) | [static] |

Ensure exceptions are handled cleanly in parallel code, by causing all processes to throw if any one does.

- Parameters:

-

flag is set to true if this process has thrown.

Definition at line 197 of file PetscTools.cpp.

References EXCEPTION, and ReplicateBool().

Referenced by AbstractCardiacTissue< ELEMENT_DIM, SPACE_DIM >::AbstractCardiacTissue(), AbstractFunctionalCalculator< ELEMENT_DIM, SPACE_DIM, PROBLEM_DIM >::Calculate(), DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::CalculateBoundingBox(), DistributedTetrahedralMesh< ELEMENT_DIM, SPACE_DIM >::ConstructFromMeshReader(), CellMLToSharedLibraryConverter::ConvertCellmlToSo(), ExtendedBidomainTissue< SPACE_DIM >::CreateExtracellularConductivityTensors(), BidomainTissue< SPACE_DIM >::CreateExtracellularConductivityTensors(), ExtendedBidomainTissue< SPACE_DIM >::CreateGGapConductivities(), AbstractCardiacTissue< ELEMENT_DIM, SPACE_DIM >::CreateIntracellularConductivityTensor(), ExtendedBidomainTissue< SPACE_DIM >::CreateIntracellularConductivityTensorSecondCell(), ExtendedBidomainTissue< SPACE_DIM >::ExtendedBidomainTissue(), ReplicatableVector::Resize(), ElectrodesStimulusFactory< DIM >::SetCompatibleExtracellularStimulus(), AbstractCardiacProblem< ELEMENT_DIM, SPACE_DIM, PROBLEM_DIM >::Solve(), ExtendedBidomainTissue< SPACE_DIM >::SolveCellSystems(), and AbstractCardiacTissue< ELEMENT_DIM, SPACE_DIM >::SolveCellSystems().

| void PetscTools::ResetCache | ( | ) | [static] |

Reset our cached values: whether PETSc is initialised, how many processors there are, and which one we are.

Definition at line 58 of file PetscTools.cpp.

References mNumProcessors, mPetscIsInitialised, and mRank.

Referenced by CheckCache(), and PetscSetupUtils::ResetStatusCache().

| void PetscTools::SetupMat | ( | Mat & | rMat, | |

| int | numRows, | |||

| int | numColumns, | |||

| unsigned | rowPreallocation, | |||

| int | numLocalRows = PETSC_DECIDE, |

|||

| int | numLocalColumns = PETSC_DECIDE, |

|||

| bool | ignoreOffProcEntries = true, |

|||

| bool | newAllocationError = true | |||

| ) | [static] |

Set up a matrix - set the size using the given parameters. The number of local rows and columns is by default PETSC_DECIDE. SetFromOptions is called.

- Parameters:

-

rMat the matrix numRows the number of rows in the matrix numColumns the number of columns in the matrix rowPreallocation the max number of nonzero entries expected on a row A value of 0 is allowed: no preallocation is then done and the user must preallocate the memory for the matrix themselves. numLocalRows the number of local rows (defaults to PETSC_DECIDE) numLocalColumns the number of local columns (defaults to PETSC_DECIDE) ignoreOffProcEntries tells PETSc to drop off-processor entries newAllocationError tells PETSc whether to set the MAT_NEW_NONZERO_ALLOCATION_ERR. ** currently only used in PETSc 3.3 and later ** in PETSc 3.2 and earlier MAT_NEW_NONZERO_ALLOCATION_ERR defaults to false in PETSc 3.3 MAT_NEW_NONZERO_ALLOCATION_ERR defaults to true

Definition at line 267 of file PetscTools.cpp.

References IsSequential().

Referenced by AbstractContinuumMechanicsSolver< DIM >::AllocateMatrixMemory(), Hdf5DataWriter::ApplyPermutation(), Hdf5DataWriter::DefineFixedDimensionUsingMatrix(), HasParMetis(), OperatorSplittingMonodomainSolver< ELEMENT_DIM, SPACE_DIM >::InitialiseForSolve(), MonodomainSolver< ELEMENT_DIM, SPACE_DIM >::InitialiseForSolve(), ExtendedBidomainSolver< ELEM_DIM, SPACE_DIM >::InitialiseForSolve(), BidomainSolver< ELEMENT_DIM, SPACE_DIM >::InitialiseForSolve(), LinearSystem::LinearSystem(), NodePartitioner< ELEMENT_DIM, SPACE_DIM >::PetscMatrixPartitioning(), ReadPetscObject(), LinearSystem::SetPrecondMatrixIsDifferentFromLhs(), SimplePetscNonlinearSolver::Solve(), and AbstractNonlinearAssemblerSolverHybrid< ELEMENT_DIM, SPACE_DIM, PROBLEM_DIM >::VerifyJacobian().

Member Data Documentation

const unsigned PetscTools::MASTER_RANK = 0 [static] |

As a convention, we consider processor 0 the master process.

Definition at line 140 of file PetscTools.hpp.

Referenced by AmMaster().

bool PetscTools::mIsolateProcesses = false [static, private] |

Whether to pretend that we're just running many master processes independently.

Definition at line 124 of file PetscTools.hpp.

Referenced by AmMaster(), AmTopMost(), Barrier(), GetWorld(), IsIsolated(), IsolateProcesses(), IsSequential(), and ReplicateBool().

unsigned PetscTools::mNumProcessors = 0 [static, private] |

The total number of processors.

Definition at line 118 of file PetscTools.hpp.

Referenced by AmTopMost(), CheckCache(), GetNumProcs(), IsParallel(), IsSequential(), and ResetCache().

bool PetscTools::mPetscIsInitialised = false [static, private] |

Whether PETSc has been initialised.

Definition at line 115 of file PetscTools.hpp.

Referenced by Barrier(), IsInitialised(), ReplicateBool(), and ResetCache().

unsigned PetscTools::mRank = 0 [static, private] |

Which processors we are.

Definition at line 121 of file PetscTools.hpp.

Referenced by AmMaster(), AmTopMost(), GetMyRank(), and ResetCache().

The documentation for this class was generated from the following files:

- global/src/parallel/PetscTools.hpp

- global/src/parallel/PetscTools.cpp

1.6.2

1.6.2